I just finished implementing Metropolis light transport (MLT) in my bidirectional path tracer. I will post more details later, but for now here's a very rough, quick summary and a few early test renders.

Rather than implementing Veach's path space MLT as I had originally planned, I decided to implement primary sample space MLT based on the

paper by Kelemen et al. This algorithm works by mutating points in the "primary sample space", the space of random numbers that drive a Monte Carlo renderer. Each point in this space maps to a particular path, and mutations in this space correspond to mutations in path space. The algorithm is very general because it works by manipulating the random number stream that drives the renderer rather than manipulating the paths themselves. Mutating paths directly can be relatively tricky.

A lot went into implementing MLT. I wrote a nice random number stream system, with lazy evaluation of random numbers and mutations. I made some significant modifications to my bidirectional path tracing core so that MLT could be added in an elegant way. I made a method to compute an approximate average scalar contribution for the entire image using a Halton sequence to distribute samples over the image (I use this when normalizing the results of the Metropolis sampling algorithm). And of course I implemented the Metropolis–Hastings algorithm.

In my renderer, bidirectional path tracing can now be run with or without MLT. Furthermore, I can continue adding features to the underlying bidirectional path tracer and they will automatically work with MLT enabled.

There are a number of optimizations that I plan to add to improve the rate of convergence, but for now I'm satisfied that the algorithm is working correctly and converges to the same results as regular bidirectional path tracing. MLT currently converges more slowly than bidirectional path tracing alone in most cases, but this should be fixed with a little more work. I also want to do some more testing.

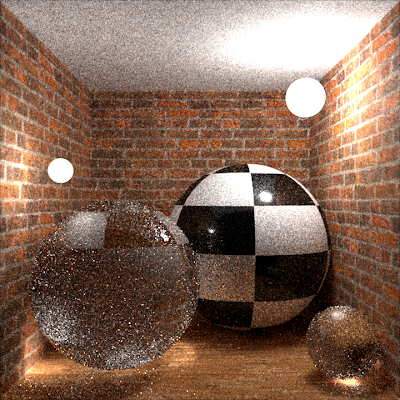

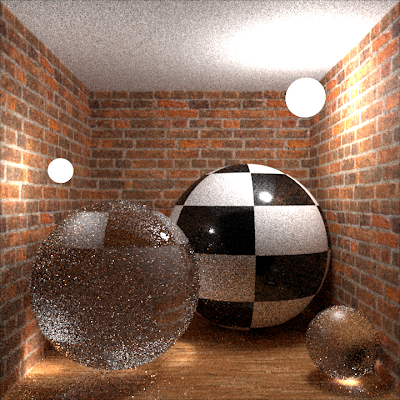

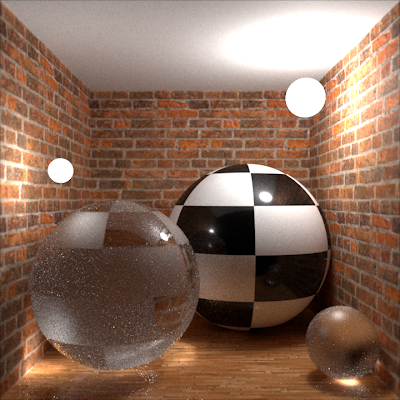

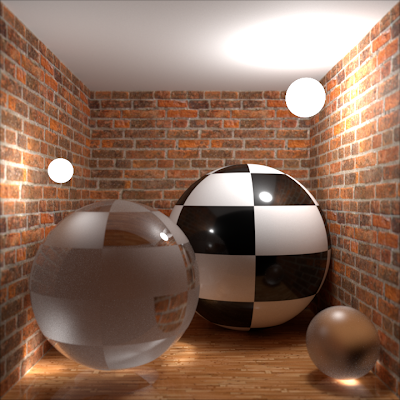

Here are some early test renders. Click the images to view them at full size.

|

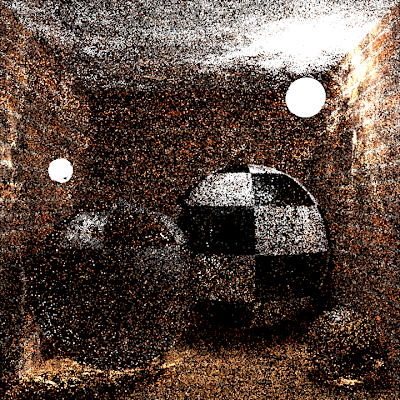

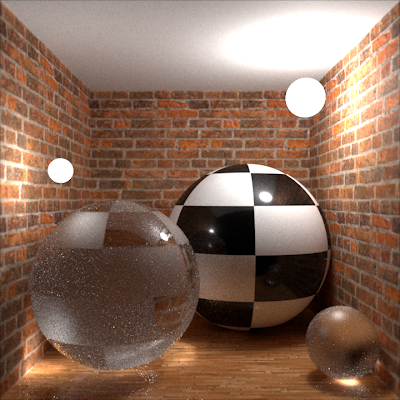

| Kelemen MLT on top of bidirectional path tracing. |

|

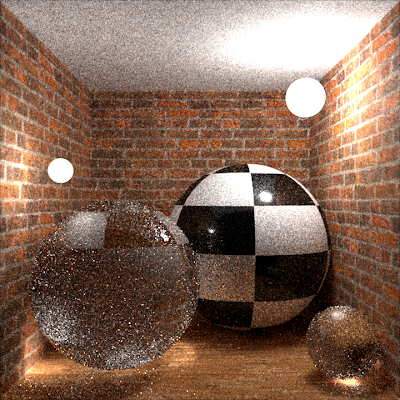

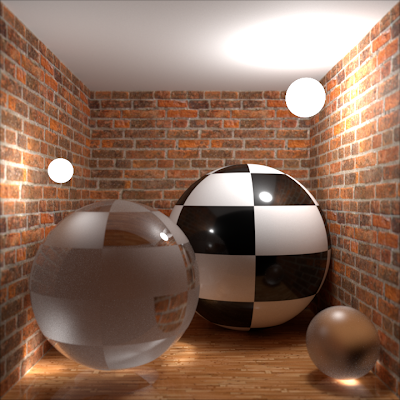

| Bidirectional path tracing without MLT. Same number of samples as the image above. |

|

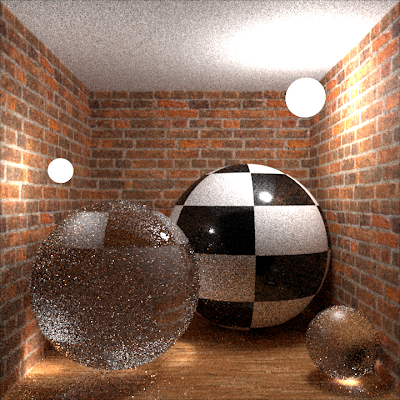

| A longer MLT render. |

|

| High quality bidirectional path tracing reference. |

|

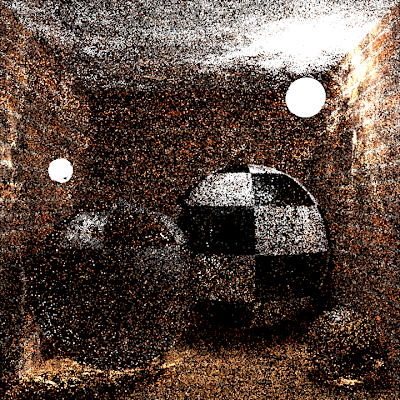

| An MLT render with only local (small step) mutations. This image shows the expected streaky and clumpy artifacts that result from locally exploring the space. |

|

| An MLT render with only global (large step) mutations. Same number of samples as the previous image. |

|

| An MLT render with both types of mutations. Same number of samples as the previous image. |

|

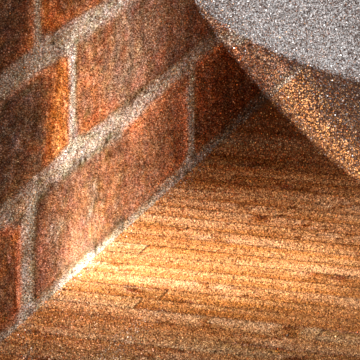

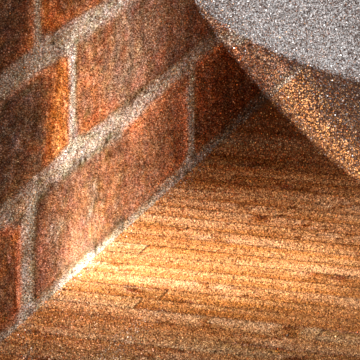

| A close-up of a caustic rendered using MLT. While many parts of the image are noisier than the bidirectional path tracing version below, the bright caustic on the floor and wall are more converged. |

|

| A close-up of a caustic rendered using bidirectional path tracing, with the same number of samples as the image above. |